Introduction: AI Lands in Your Pocket

Imagine a world where your smartphone instantly recognizes your face without sending your data to a remote server, where your autonomous car reacts to obstacles in milliseconds without an internet connection, where your connected devices work perfectly even during network outages. This world is no longer science fiction: it’s Edge AI.

Edge AI, or artificial intelligence at the edge, represents a fundamental shift in how we design and deploy AI. Rather than centralizing computing power in massive cloud data centers, this revolutionary approach moves intelligence directly to the devices we use daily.

This shift is rewriting the rules for businesses and opening up new avenues for innovation. But why is this trend gaining such momentum? And how can you leverage it in your projects?

What is Edge AI? Definition and Strategic Stakes

Understanding Edge Computing Applied to AI

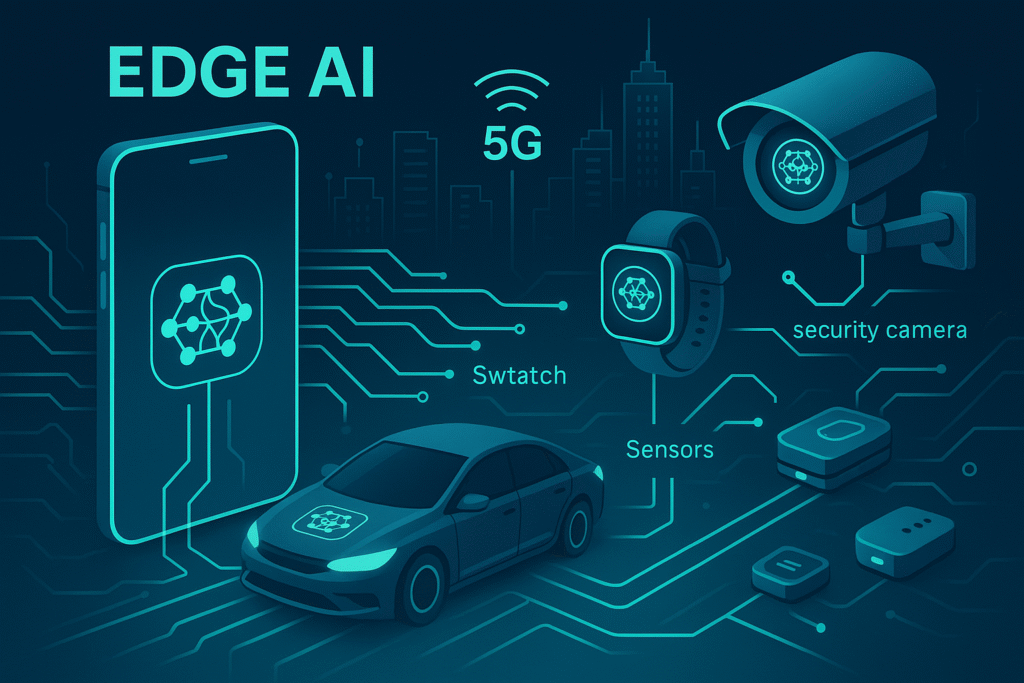

Embedded AI, also known as edge artificial intelligence or on-device AI, combines two major technological concepts: edge computing and artificial intelligence. Concretely, it involves executing AI algorithms directly on end devices (smartphones, IoT sensors, surveillance cameras, vehicles) rather than in the cloud.

This decentralized approach transforms each device into an intelligent mini-processing center, capable of making autonomous decisions in real-time. Local AI revolutionizes the traditional AI architecture.

![Cloud AI vs Edge AI comparative diagram] Architectural comparison: centralized vs distributed processing

Business Stakes of Edge AI

The on-device artificial intelligence market is growing rapidly. According to Grand View Research, it is projected to reach $59.6 billion by 2030, with a compound annual growth rate of 20.8%.

![Edge AI market growth chart 2025-2030] Global Edge AI market evolution (source: Grand View Research)

This expansion of decentralized artificial intelligence is explained by several strategic factors:

- Operational cost reduction: less bandwidth consumed, fewer cloud servers needed

- New monetization opportunities: AI services working offline, enriched user experiences

- Regulatory compliance: easier alignment with GDPR and local data protection regulations

- Competitive differentiation: superior performance and innovative features

The 4 Decisive Advantages of Edge AI

1. Ultra-low Latency: Real-time Responsiveness

Latency is the time between a request and its response. With Edge AI, it typically drops by one to two orders of magnitude:

- Cloud AI: 100-500 milliseconds (including network round-trip)

- Edge AI: 1-10 milliseconds (local processing)

This difference is crucial for applications like autonomous driving, where a few milliseconds can make the difference between an avoided accident and a collision.
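To make the stakes concrete, here is a minimal sketch that converts latency into distance travelled. The 100 km/h speed is an illustrative assumption, and the two latencies are simply the upper bounds quoted above.

```python
def distance_during_latency(speed_kmh: float, latency_ms: float) -> float:
    """Return the metres travelled while waiting `latency_ms` at `speed_kmh`."""
    speed_m_per_s = speed_kmh * 1000 / 3600  # km/h -> m/s
    return speed_m_per_s * latency_ms / 1000  # ms -> s


# Illustrative scenario: a vehicle at 100 km/h (assumed value).
for label, latency_ms in [("cloud", 500), ("edge", 10)]:
    metres = distance_during_latency(100, latency_ms)
    print(f"{label}: {latency_ms} ms -> {metres:.2f} m travelled")
```

Under these assumptions the vehicle covers about 13.89 m before a 500 ms cloud response arrives, against about 0.28 m for a 10 ms local response.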

2. Privacy Protection: Your Data Stays Home

Edge artificial intelligence revolutionizes privacy by keeping sensitive data on the device. No more need to send your photos, voice, or biometric data to external servers.

Concrete benefits include:

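- Easier GDPR compliance: less personal data leaves the device, although local processing remains subject to the regulation

- Reduced risk of data interception in transit

- Greater control over personal information usage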

- Enhanced user trust

3. Dramatic Infrastructure Cost Reduction

Local AI enables substantial savings:

- Bandwidth: 40 to 90% network traffic reduction

- Cloud storage: less data to host long-term

- Servers: optimized sizing of centralized infrastructures

- Energy: reduced data center consumption
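The bandwidth figure can be sanity-checked with simple arithmetic. The sketch below compares a camera that streams everything to the cloud with one that uploads only clips flagged on-device. The 2 Mbit/s bitrate and the 10% upload share are hypothetical values chosen for illustration, not measurements.

```python
SECONDS_PER_MONTH = 30 * 24 * 3600


def monthly_upload_gb(bitrate_mbps: float, upload_fraction: float) -> float:
    """Gigabytes uploaded per month by one camera.

    bitrate_mbps: video bitrate in megabits per second.
    upload_fraction: share of the time the stream is actually sent (1.0 = always).
    """
    bits = bitrate_mbps * 1e6 * upload_fraction * SECONDS_PER_MONTH
    return bits / 8 / 1e9


cloud_gb = monthly_upload_gb(2.0, 1.0)  # stream everything to the cloud
edge_gb = monthly_upload_gb(2.0, 0.1)   # upload only clips flagged on-device
reduction = 1 - edge_gb / cloud_gb
print(f"cloud: {cloud_gb:.1f} GB, edge: {edge_gb:.1f} GB, saved: {reduction:.0%}")
```

With these assumed inputs, one camera goes from 648 GB to about 65 GB per month, a 90% reduction. The real saving depends entirely on how much data the device can filter locally.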

4. Offline Operation: Total Autonomy

Unlike cloud solutions, on-device AI works even without an internet connection. This autonomy opens new use cases:

- Industrial applications in isolated environments

- Emergency services during network outages

- Deployments in areas with poor mobile coverage

- Enhanced resilience of critical systems

Key Technologies and Development Frameworks

TensorFlow Lite: Google’s Mobile AI

TensorFlow Lite is the reference framework for deploying embedded AI models on mobile and edge devices. Developed by Google, it represents the optimized version of TensorFlow for edge intelligence.

![TensorFlow Lite architecture diagram] TensorFlow Lite optimized architecture for Edge AI

Key characteristics:

- Optimized size: models up to 75% smaller than their float32 TensorFlow originals when quantized to int8

- Hardware acceleration: GPU, NPU, and DSP support

- Multi-platform: Android, iOS, embedded Linux, microcontrollers

- Simplified APIs: easy integration into applications

ONNX Runtime: Universal Portability

ONNX (Open Neural Network Exchange) offers broad interoperability between AI frameworks. This open-source format, backed by Microsoft, Meta (formerly Facebook), and many others, makes it easier to deploy local AI across different platforms.

ONNX Runtime executes these models on edge devices with:

- Support for models exported from major frameworks (PyTorch, TensorFlow, scikit-learn)

- Automatic optimizations for each hardware architecture

- Unified deployment on CPU, GPU, and specialized accelerators

- Graph-level optimizations such as constant folding and operator fusion

Core ML: The Apple Ecosystem

Apple’s Core ML natively integrates embedded AI into the iOS/macOS ecosystem with unique advantages for edge artificial intelligence:

- Neural Engine: dedicated acceleration on Apple chips

- System integration: native iOS/macOS APIs

- Privacy by design: local processing by default

- Development tools: Xcode integration and Create ML

Concrete Use Cases and Application Sectors

Smartphone AI: The Assistant in Your Pocket

Modern smartphones embed more and more native AI features:

Computational Photography:

- Real-time scene recognition

- Portrait mode with background blur

- Automatic image quality enhancement

- Unwanted object detection and removal

Offline Voice Assistants:

- Voice recognition without connection

- Local natural language processing

- User-personalized responses

- Complete privacy protection

Internet of Things (IoT): Distributed Intelligence

Edge AI transforms connected objects into autonomous intelligent devices:

Smart Cities:

- Surveillance cameras with automatic incident detection

- Traffic sensors optimizing lights in real-time

- Intelligent parking systems

- Environmental monitoring with automatic alerts

Industry 4.0:

- Predictive equipment maintenance

- Automated quality control through industrial vision

- Real-time energy optimization

- Defect detection on production lines

Autonomous Vehicles: Critical AI in Motion

Autonomous vehicles represent one of the most demanding on-device AI use cases:

- Obstacle detection: real-time recognition of pedestrians, vehicles, signage

- Critical decision-making: instant reactions to emergency situations

- Sensor fusion: combining LIDAR, camera, and radar data

- Continuous learning: performance improvement based on experience
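As an illustration of the sensor fusion idea, here is a minimal sketch of inverse-variance weighting, one simple way to combine independent distance estimates. It is not how any particular vehicle stack works, and the sensor readings and variances are made-up values.

```python
from typing import List, Tuple


def fuse_estimates(estimates: List[Tuple[float, float]]) -> Tuple[float, float]:
    """Fuse independent (value, variance) estimates by inverse-variance weighting.

    More precise sensors (smaller variance) receive a larger weight.
    Returns the fused value and its variance.
    """
    weights = [1.0 / variance for _, variance in estimates]
    total_weight = sum(weights)
    fused = sum(w * value for w, (value, _) in zip(weights, estimates)) / total_weight
    return fused, 1.0 / total_weight


# Hypothetical distance-to-obstacle readings in metres: (value, variance).
readings = [
    (20.0, 0.01),  # LIDAR: very precise
    (20.4, 0.04),  # radar: less precise
    (19.0, 1.00),  # camera depth estimate: noisy
]
distance, variance = fuse_estimates(readings)
print(f"fused distance: {distance:.2f} m (variance {variance:.4f})")
```

The fused estimate stays close to the LIDAR reading, and its variance is lower than that of any single sensor, which is the point of combining them.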

Practical Tutorial: Deploying an AI Model on Raspberry Pi

Prerequisites and Environment Setup

For this tutorial, you’ll need:

- Raspberry Pi 4 (4GB RAM minimum recommended)

- 32GB Class 10 microSD card

- Raspberry Pi camera or USB webcam

- Raspberry Pi OS installed and configured

Dependencies Installation

    # System update
    sudo apt update && sudo apt upgrade -y

    # Python and pip installation
    sudo apt install python3-pip python3-venv -y

    # Virtual environment creation
    python3 -m venv edge-ai-env
    source edge-ai-env/bin/activate

    # TensorFlow Lite installation
    pip install tflite-runtime opencv-python numpy pillow

Object Detection Model Deployment

Let’s create a real-time object detection script:

    import cv2
    import numpy as np
    from tflite_runtime.interpreter import Interpreter

    # Load the TensorFlow Lite model and allocate its tensors
    interpreter = Interpreter(model_path="detect.tflite")
    interpreter.allocate_tensors()

    # Read the input size and dtype from the model instead of hard-coding them
    input_details = interpreter.get_input_details()
    output_details = interpreter.get_output_details()
    _, in_height, in_width, _ = input_details[0]['shape']
    is_float_model = input_details[0]['dtype'] == np.float32

    # Camera configuration
    cap = cv2.VideoCapture(0)
    cap.set(cv2.CAP_PROP_FRAME_WIDTH, 640)
    cap.set(cv2.CAP_PROP_FRAME_HEIGHT, 480)

    while True:
        ret, frame = cap.read()
        if not ret:
            break

        # Preprocessing: BGR -> RGB, resize to the model input size, add a batch axis
        rgb_frame = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)
        resized = cv2.resize(rgb_frame, (in_width, in_height))
        input_data = np.expand_dims(resized, axis=0)
        if is_float_model:
            # Float models expect normalized input; the exact scaling depends on the model
            input_data = (input_data / 255.0).astype(np.float32)

        # Inference
        interpreter.set_tensor(input_details[0]['index'], input_data)
        interpreter.invoke()

        # Output order (boxes, classes, scores) matches common SSD MobileNet
        # exports; check output_details if your model differs
        boxes = interpreter.get_tensor(output_details[0]['index'])
        classes = interpreter.get_tensor(output_details[1]['index'])
        scores = interpreter.get_tensor(output_details[2]['index'])

        # Draw every detection above the confidence threshold
        frame_h, frame_w = frame.shape[:2]
        for i in range(len(scores[0])):
            if scores[0][i] > 0.5:
                # Boxes are normalized as [ymin, xmin, ymax, xmax]
                y1 = int(boxes[0][i][0] * frame_h)
                x1 = int(boxes[0][i][1] * frame_w)
                y2 = int(boxes[0][i][2] * frame_h)
                x2 = int(boxes[0][i][3] * frame_w)
                cv2.rectangle(frame, (x1, y1), (x2, y2), (0, 255, 0), 2)
                cv2.putText(frame, f'Class {int(classes[0][i])}: {scores[0][i]:.2f}',
                            (x1, y1 - 10), cv2.FONT_HERSHEY_SIMPLEX, 0.5, (0, 255, 0), 2)

        cv2.imshow('Edge AI Detection', frame)
        if cv2.waitKey(1) & 0xFF == ord('q'):
            break

    cap.release()
    cv2.destroyAllWindows()

Performance Optimization

To optimize performance on Raspberry Pi:

- Use quantized models: size reduction and computation acceleration

- Limit input resolution: balance between accuracy and speed

- Enable multi-threading: set the interpreter’s num_threads option to use the Pi 4’s four cores

- Consider a Coral USB Accelerator: Edge TPU offloading for maximum performance
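Before and after applying any of these optimizations, it helps to measure rather than guess. The sketch below is a small, hypothetical timing helper (the name `benchmark` and its parameters are not part of any library) that reports mean latency, an approximate 95th percentile, and throughput for any callable. A stand-in workload is used here so the snippet runs anywhere.

```python
import statistics
import time
from typing import Callable, Dict


def benchmark(run_inference: Callable[[], object],
              warmup: int = 3, runs: int = 20) -> Dict[str, float]:
    """Time `run_inference` and return latency statistics in milliseconds."""
    for _ in range(warmup):  # warm-up runs are excluded from the timings
        run_inference()

    timings_ms = []
    for _ in range(runs):
        start = time.perf_counter()
        run_inference()
        timings_ms.append((time.perf_counter() - start) * 1000)

    mean_ms = statistics.mean(timings_ms)
    p95_ms = sorted(timings_ms)[int(0.95 * (runs - 1))]  # approximate percentile
    fps = 1000 / mean_ms if mean_ms > 0 else float("inf")
    return {"mean_ms": mean_ms, "p95_ms": p95_ms, "fps": fps}


# Stand-in workload; on the Pi you would pass the interpreter's invoke method.
stats = benchmark(lambda: sum(i * i for i in range(10_000)))
print({name: round(value, 3) for name, value in stats.items()})
```

On the Raspberry Pi, calling `benchmark(interpreter.invoke)` after setting an input tensor would time the model itself, which makes it easy to compare a float model with its quantized version or different thread counts.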

Model Optimization Techniques for Edge

Quantization: Reducing Precision for Speed Gains

Quantization converts model weights from float32 to int8, reducing size by about 75% (4 bytes per weight down to 1) and usually accelerating inference. This optimization technique is crucial for embedded AI on resource-constrained devices.

![Quantization diagram float32 to int8] Quantization process: from float32 to int8 precision
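To show what the float32 to int8 mapping does numerically, here is a minimal plain-Python sketch of affine quantization (a scale plus a zero point). It illustrates the general idea rather than TensorFlow Lite's exact implementation, and the weight values are made up.

```python
from typing import List, Tuple

QMIN, QMAX = -128, 127  # int8 range


def quantization_params(values: List[float]) -> Tuple[float, int]:
    """Compute a scale and zero point so that [min, max] maps onto int8."""
    low = min(min(values), 0.0)   # the range must contain 0.0 so it stays exact
    high = max(max(values), 0.0)
    scale = (high - low) / (QMAX - QMIN) or 1.0
    zero_point = round(QMIN - low / scale)
    return scale, zero_point


def quantize(values: List[float], scale: float, zero_point: int) -> List[int]:
    """Map floats to int8 codes, clamping to the representable range."""
    return [max(QMIN, min(QMAX, round(v / scale) + zero_point)) for v in values]


def dequantize(codes: List[int], scale: float, zero_point: int) -> List[float]:
    """Recover approximate floats from int8 codes."""
    return [(code - zero_point) * scale for code in codes]


weights = [-0.5, 0.0, 0.25, 1.5]  # illustrative float32 weights
scale, zero_point = quantization_params(weights)
codes = quantize(weights, scale, zero_point)
restored = dequantize(codes, scale, zero_point)
print(codes)
print([round(r, 4) for r in restored])
```

Each weight is stored in one byte instead of four, and the restored values differ from the originals by at most half a quantization step, which is why accuracy usually degrades only slightly.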

Post-training quantization with TensorFlow Lite:

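    import tensorflow as tf

    # Conversion with quantization ('model_path' is a SavedModel directory)
    converter = tf.lite.TFLiteConverter.from_saved_model('model_path')
    # Optimize.DEFAULT alone applies dynamic range quantization (int8 weights);
    # full integer quantization also requires a representative dataset
    converter.optimizations = [tf.lite.Optimize.DEFAULT]
    tflite_model = converter.convert()

    # Optimized model saving
    with open('model_quantized.tflite', 'wb') as f:
        f.write(tflite_model)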

Pruning: Eliminating Unnecessary Connections

Pruning removes unimportant neural network connections, reducing complexity without a major impact on accuracy. This model optimization technique is particularly effective for local artificial intelligence.

![Neural network pruning visualization] Before/after pruning: elimination of non-essential connections

Benefits of pruning for edge AI:

- 50 to 90% parameter reduction

- Faster inference when the runtime or hardware exploits sparsity (the speedup is rarely proportional to the parameter count)

- Memory consumption decrease

- Quantization compatibility
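One common way to decide which connections are "unimportant" is magnitude pruning: zero out the weights with the smallest absolute values. The sketch below shows that idea on a flat list of made-up weights. Real toolkits apply it per layer and usually fine-tune afterwards, which is not shown here.

```python
from typing import List


def magnitude_prune(weights: List[float], sparsity: float) -> List[float]:
    """Zero the fraction `sparsity` of weights with the smallest magnitude."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    prune_count = int(len(weights) * sparsity)
    # Indices ordered from the smallest to the largest absolute value
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for index in order[:prune_count]:
        pruned[index] = 0.0
    return pruned


layer = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02, 0.1, -0.9]  # illustrative weights
print(magnitude_prune(layer, sparsity=0.5))
# -> [0.8, 0.0, 0.3, 0.0, -0.6, 0.0, 0.0, -0.9]
```

Half of the weights become zero while the largest ones, which carry most of the signal, are untouched. The zeros only translate into speed or memory gains if the storage format and runtime take advantage of them.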

Knowledge Distillation: Transferring Knowledge

This technique trains a compact model (student) to reproduce the performance of a complex model (teacher).

The process involves:

- Teacher model training: an accurate but large model

- “Soft targets” generation: the teacher’s output probabilities, usually softened with a temperature

- Student training: learning from the soft targets

- Fine-tuning: final optimization on real data
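The steps above can be sketched as a loss function in plain Python. This follows the widely used formulation that blends a temperature-softened KL term with ordinary cross-entropy. The temperature of 4.0 and the mixing weight `alpha` of 0.5 are illustrative assumptions, as are the logits.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Softmax with temperature; higher temperatures give flatter distributions."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits: List[float], teacher_logits: List[float],
                      true_label: int, temperature: float = 4.0,
                      alpha: float = 0.5) -> float:
    """Blend the soft-target loss (teacher) with the hard-label loss (ground truth)."""
    soft_targets = softmax(teacher_logits, temperature)
    student_soft = softmax(student_logits, temperature)
    # KL divergence between the teacher's and the student's softened outputs
    kl = sum(p * math.log(p / q) for p, q in zip(soft_targets, student_soft) if p > 0)
    # Standard cross-entropy against the true class at temperature 1
    hard = -math.log(softmax(student_logits)[true_label])
    return alpha * temperature ** 2 * kl + (1 - alpha) * hard


teacher = [2.0, 1.0, 0.1]
print(softmax(teacher, temperature=1.0))
print(softmax(teacher, temperature=4.0))  # flatter: the "soft targets"
print(distillation_loss([1.5, 1.2, 0.3], teacher, true_label=0))
```

The softened probabilities expose how the teacher ranks the wrong classes, which is the extra signal the student learns from. The `temperature ** 2` factor keeps the two terms on a comparable scale when the temperature changes.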

Hardware Ecosystem: Dedicated Chips and Accelerators

Neural Processing Units (NPU): Native AI

NPUs represent a new generation of processors specialized in AI:

Implementation examples:

- Apple Neural Engine: up to 15.8 TOPS on A15 Bionic

- Qualcomm AI Engine: optimized CPU+GPU+DSP integration

- Google Tensor: custom TPU for Pixel phones

- Huawei Kirin NPU: Da Vinci architecture dedicated to AI

Google Coral and Edge TPU: USB Acceleration

The Google Coral Dev Board and the Coral USB Accelerator, both built around the Edge TPU, offer strong embedded AI performance at an accessible cost:

![Google Coral Edge TPU USB photo] Google Coral Edge TPU: compact AI accelerator in USB format

- Performance: 4 TOPS (tera-operations per second)

- Power consumption: about 2 W (roughly 2 TOPS per watt)

- Compatibility: runs TensorFlow Lite models that are int8-quantized and compiled for the Edge TPU

- Ease of use: plugs into a Raspberry Pi over USB once the Edge TPU runtime is installed

Intel Neural Compute Stick: Portable AI

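The Intel Neural Compute Stick 2 (NCS2) made local AI accelerators widely accessible. Intel has since discontinued the product, so check availability and OpenVINO version support before adopting it:

- Compact and portable USB format

- OpenVINO toolkit support

- Up to 8x the performance of the first-generation Neural Compute Stick, according to Intel

- Accessible price for prototyping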

Edge AI Challenges and Limitations

Hardware and Energy Constraints

Embedded AI must deal with limited resources, unlike traditional cloud solutions:

![Cloud vs Edge resource comparison] Resource constraints: unlimited Cloud vs constrained Edge

- Restricted memory: models must be compressed to fit

- Limited computing power: architectures must be chosen for the target hardware

- Energy consumption: inference drains the battery of mobile devices

- Heat dissipation: sustained workloads are hard to cool in a confined space

Updates and Maintenance Management

Large-scale deployment raises operational challenges:

- Model updates on millions of devices

- Production performance monitoring

- Version management and compatibility

- Rollback in case of critical issues
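One way to address these challenges is a staged (canary) rollout: update a small share of the fleet first, check its health, then continue or roll back. The sketch below is a simplified in-memory model of that idea. The `Device` class, `staged_rollout` function, and thresholds are all hypothetical, and a real system would also handle networking, retries, and persistence.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Device:
    device_id: str
    model_version: str
    previous_version: Optional[str] = None

    def install(self, version: str) -> None:
        """Switch to `version`, remembering the old one for rollback."""
        self.previous_version = self.model_version
        self.model_version = version

    def rollback(self) -> None:
        """Return to the previously installed version, if any."""
        if self.previous_version is not None:
            self.model_version = self.previous_version
            self.previous_version = None


def staged_rollout(devices: List[Device], new_version: str,
                   health_check: Callable[[Device], bool],
                   canary_fraction: float = 0.1,
                   max_failure_rate: float = 0.05) -> bool:
    """Update a canary group first; roll it back if too many devices fail."""
    if not devices:
        return True
    canary_count = max(1, int(len(devices) * canary_fraction))
    canaries, rest = devices[:canary_count], devices[canary_count:]

    for device in canaries:
        device.install(new_version)
    failures = sum(1 for device in canaries if not health_check(device))
    if failures / len(canaries) > max_failure_rate:
        for device in canaries:
            device.rollback()
        return False  # the rest of the fleet was never touched

    for device in rest:
        device.install(new_version)
    return True


fleet = [Device(f"device-{i}", "1.0") for i in range(20)]
succeeded = staged_rollout(fleet, "1.1", health_check=lambda d: True)
print(succeeded, {d.model_version for d in fleet})
```

Because only the canary group is exposed to a faulty model, a failed update affects a small fraction of devices and is reverted before it reaches the rest.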

Security and Model Protection

Edge AI introduces new security risks:

- Model theft: extraction of model files or weights from devices

- Adversarial attacks: inputs crafted to mislead the model at inference time

- Privacy leakage: inference of sensitive training data from model behavior

- Tampering: malicious modification of the model or its behavior
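As a small illustration of a defence against tampering, the sketch below checks a model file's integrity with an HMAC before loading it. It uses only Python's standard `hmac` and `hashlib` modules. The key and model bytes are placeholders, and a shared secret stored on the device is itself a weakness, so production systems would more likely rely on asymmetric signatures and hardware-backed key storage.

```python
import hashlib
import hmac


def sign_model(model_bytes: bytes, key: bytes) -> str:
    """Compute an HMAC-SHA256 tag for the model file contents."""
    return hmac.new(key, model_bytes, hashlib.sha256).hexdigest()


def verify_model(model_bytes: bytes, key: bytes, expected_tag: str) -> bool:
    """Return True only if the model matches the tag issued at release time."""
    actual_tag = sign_model(model_bytes, key)
    # compare_digest avoids leaking information through timing differences
    return hmac.compare_digest(actual_tag, expected_tag)


# Placeholder values for illustration only.
secret_key = b"replace-with-a-real-secret"
released_model = b"\x00\x01fake-model-weights"
release_tag = sign_model(released_model, secret_key)

print(verify_model(released_model, secret_key, release_tag))            # True
print(verify_model(released_model + b"\xff", secret_key, release_tag))  # False
```

A device that refuses to load a model whose tag does not verify will reject files modified after release, although this does nothing against model extraction or adversarial inputs.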

The Future of Edge AI: Trends and Perspectives

Convergence with 5G and Edge Computing

5G catalyzes Edge AI adoption by enabling:

- Ultra-low latency (around 1 ms targeted on the radio link) for critical applications

- Massive bandwidth for model synchronization

- Network slicing to isolate AI flows

- Distributed edge computing close to the base station (multi-access edge computing)

Federated AI: Collaborative and Private Learning

Federated learning combines the advantages of local AI and collective intelligence:

![Federated learning diagram] Federated learning architecture: collaboration without data sharing

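- Decentralized training without sharing raw data

- Continuous improvement of edge models

- Stronger privacy: only model updates leave the device, and these can be further protected with secure aggregation or differential privacy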

- Resilience to centralized failures
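The aggregation step at the heart of federated learning can be sketched in a few lines. This is a minimal version of federated averaging, where each client's locally trained weights are averaged in proportion to its number of training samples. The weight vectors and sample counts are invented for the example.

```python
from typing import List, Tuple


def federated_average(client_updates: List[Tuple[List[float], int]]) -> List[float]:
    """Average client weights, weighting each client by its sample count.

    client_updates: list of (weights, num_samples) pairs, one per device.
    Only these weights are shared; the raw training data stays on the device.
    """
    total_samples = sum(num_samples for _, num_samples in client_updates)
    dimension = len(client_updates[0][0])
    return [
        sum(weights[i] * num_samples for weights, num_samples in client_updates)
        / total_samples
        for i in range(dimension)
    ]


# Two hypothetical devices with different amounts of local data.
updates = [
    ([1.0, 2.0], 100),
    ([3.0, 4.0], 300),
]
print(federated_average(updates))  # -> [2.5, 3.5]
```

The device with three times more data pulls the global model three times harder, so the result sits closer to its weights than a plain average would.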

AutoML for Edge: AI Democratization

Specialized Edge AutoML tools simplify development:

- Automatic generation of optimized models

- Adaptation to specific hardware constraints

- Automated deployment pipeline

- Continuous monitoring and optimization

FAQ: Frequently Asked Questions about Edge AI

What’s the difference between Edge AI and Cloud AI?

Edge artificial intelligence executes algorithms directly on the user device, while Cloud AI processes data on remote servers. Embedded artificial intelligence offers lower latency, better privacy, and offline operation, but with limited computing resources.

What are the technical prerequisites for implementing Edge AI?

Prerequisites include devices with sufficient memory (typically 1-2GB for vision models on Linux-class boards; microcontroller-class models need far less), a compatible processor (ARM or x86), and ideally an AI accelerator (NPU, GPU). On the software side, you need to be familiar with frameworks like TensorFlow Lite, ONNX Runtime, or Core ML depending on your target ecosystem.

Can Edge AI completely replace Cloud AI?

No, embedded AI and Cloud AI are complementary. Edge artificial intelligence excels at real-time, privacy-sensitive tasks, while Cloud AI remains superior for complex calculations, large model training, and big data analysis.

Which sectors benefit most from Edge AI?

Sectors most impacted by local AI are automotive (autonomous vehicles), healthcare (connected medical devices), industry (predictive maintenance), security (intelligent video surveillance), and smartphones (voice assistants, computational photography).

How to evaluate the ROI of an Edge AI project?

ROI is calculated by comparing benefits (cloud cost reduction, improved user experience, new revenue) to investments (development, specialized hardware, training). Bandwidth and cloud infrastructure savings are often the first positive indicators.
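As a rough illustration, the comparison can be written as ROI = (benefits - costs) / costs over a chosen period. The sketch below applies that formula. Every figure in it is hypothetical, and the three-year horizon is an assumption.

```python
def edge_ai_roi(annual_benefits: float, upfront_investment: float,
                annual_running_costs: float, years: int = 3) -> float:
    """Return ROI over `years` as a ratio: (benefits - costs) / costs."""
    total_benefits = annual_benefits * years
    total_costs = upfront_investment + annual_running_costs * years
    return (total_benefits - total_costs) / total_costs


# Hypothetical project: cloud savings and new revenue versus development,
# hardware, and training costs.
roi = edge_ai_roi(annual_benefits=120_000,
                  upfront_investment=150_000,
                  annual_running_costs=20_000)
print(f"ROI over 3 years: {roi:.1%}")
```

With these made-up inputs the project returns about 71% over three years. Replacing them with measured bandwidth and infrastructure savings gives a first realistic estimate.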

Conclusion: Prepare for the Edge AI Revolution

Embedded AI is no longer an emerging trend but a technological reality that’s already transforming our daily lives. From smartphones that recognize our faces to cars that autonomously avoid accidents, edge artificial intelligence is steadily moving closer to us.

This local AI revolution presents considerable opportunities for visionary businesses. Cost reduction, performance improvement, privacy respect, and new user experiences: the strategic advantages are multiple and measurable.

![Edge AI benefits summary infographic] Summary of embedded artificial intelligence benefits

However, successfully transitioning to distributed AI requires a methodical approach. You must master key technologies, understand hardware constraints, and develop technical skills adapted to edge computing.

Your action plan to get started:

- Evaluate your current use cases: identify applications that would benefit from Edge AI

- Train your teams: invest in skills development on TensorFlow Lite, ONNX Runtime

- Experiment with prototypes: test on Raspberry Pi or with Google Coral kits

- Measure performance: compare latency, costs, and user experience

- Plan your deployment: define your scaling strategy

The future of artificial intelligence is taking shape on your devices right now. Don’t miss this shift, which will redefine how we interact with technology in the coming years.

Ready to take the step toward edge AI? Start your first local artificial intelligence project today and position your company at the forefront of this major technological transformation.
